Traditional search couldn’t be more straightforward. As soon as a user enters a query, the search engine returns the results it deems most relevant. How it determines relevance is still largely shrouded in secrecy, though that may be for the best.

Thanks to AI, however, today’s search engines have moved past that. They don’t just provide the information the user is looking for, but also related information the user may find helpful. Gone are the days when results were limited to the so-called “ten blue links.”

AI didn’t just change search for consumers; it also changed it for businesses and organizations. The expanded information in AI-generated summaries points to a key transformation: one keyword won’t cut it anymore. You now create content not only for what consumers are searching for today but also for what they might search for later.

To make this work in your favor, it pays to understand the technique behind these detailed results. It’s known as query fan-out, and today’s blog post is all about it.

Running Additional Searches

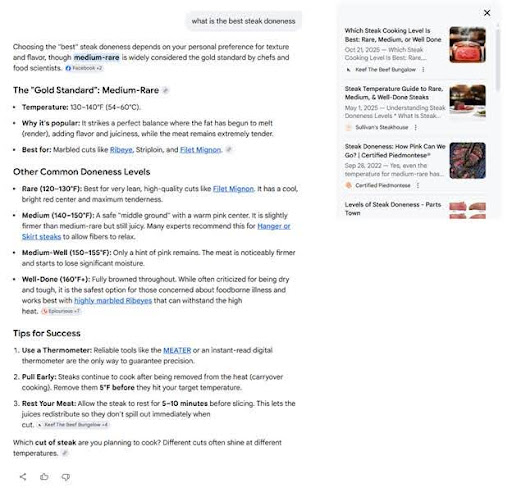

To understand how this technique works, let’s search for the best steak doneness. The search below was run in Google’s AI Mode.

Well-done ftw. Don’t judge me.

While the search gave a specific answer, it also provided further context: a comparison of other doneness levels and tips for cooking steak. That isn’t what the user asked for, but search data suggests that users tend to run follow-up searches on such topics. The handpicked list of sources on the right also supports this.

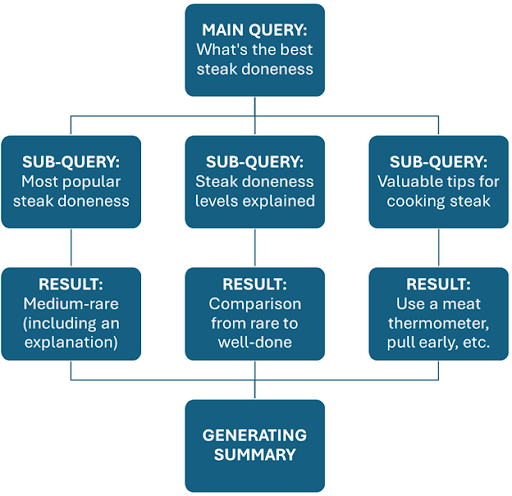

In query fan-out, the search engine breaks the main query down into multiple sub-queries. The AI then generates a response by collating relevant information from the results of each sub-query. Here’s the breakdown using the example above.

All this happens within seconds, depending on how many sub-queries the engine needs to run. One study found that the average fan-out extends to nearly 11 sub-queries per prompt, and a few prompts trigger as many as 28.
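To make the mechanics concrete, here’s a minimal Python sketch of the fan-out-and-collate loop described above. It’s an illustration under stated assumptions, not how Google’s AI Mode actually works (that isn’t public): `generate_subqueries` and `search` are hypothetical stand-ins, where a real system would likely ask an LLM for sub-queries and query a live index, and the example.com URLs are placeholders. Running the sub-queries in parallel is also an assumption, chosen because it’s one plausible way the whole process fits within seconds.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sub-query templates; a real engine would generate these with an LLM.
SUBQUERY_TEMPLATES = ["{q}", "{q} comparison", "{q} tips", "{q} pros and cons"]


def generate_subqueries(query: str) -> list[str]:
    """Hypothetical: expand the main query into sub-queries from fixed templates."""
    return [template.format(q=query) for template in SUBQUERY_TEMPLATES]


def search(subquery: str) -> list[str]:
    """Hypothetical search backend: returns three placeholder URLs per sub-query."""
    slug = subquery.replace(" ", "-")
    return [f"https://example.com/{slug}/{rank}" for rank in range(3)]


def fan_out(query: str, max_workers: int = 4) -> dict[str, list[str]]:
    """Run every sub-query in parallel and map each sub-query to its results."""
    subqueries = generate_subqueries(query)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the sub-queries.
        results = list(pool.map(search, subqueries))
    return dict(zip(subqueries, results))


def collate(results_by_subquery: dict[str, list[str]]) -> list[str]:
    """Merge all results into one list of sources, dropping duplicates but keeping order."""
    seen: dict[str, None] = {}
    for urls in results_by_subquery.values():
        for url in urls:
            seen.setdefault(url, None)
    return list(seen)


if __name__ == "__main__":
    results = fan_out("best steak doneness")
    for subquery, urls in results.items():
        print(subquery, "->", len(urls), "results")
    print(len(collate(results)), "unique sources to summarize")
```

Called as `fan_out("best steak doneness")`, the sketch runs four templated sub-queries, and `collate` merges their results into one de-duplicated list of 12 placeholder sources. In a real system, a pool like that is presumably what the model draws on when writing its summary.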

Query fan-out is important for large language models (LLMs) for two reasons. First, because LLMs are expected to answer complex questions, this technique lets them produce unique answers. That’s almost impossible to achieve through traditional search, since the returned results don’t necessarily capture the right nuance.

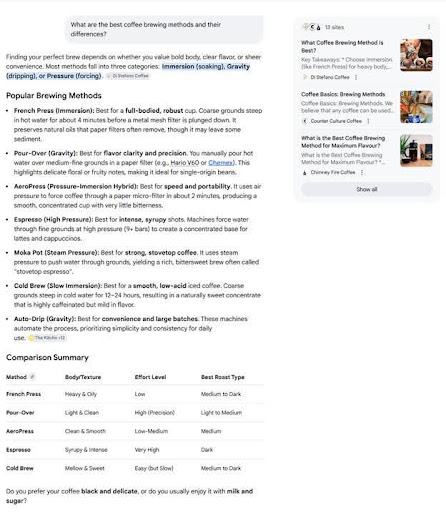

More importantly, query fan-out helps satisfy search intent better. Many of today’s queries have more than one objective, as the example below shows.

The search engine takes it a step further by outlining each brewing method’s advantages and adding a comparison table. By satisfying both explicit and implicit intents, query fan-out spares users the effort of running another search.

Implications for Content Creation

It’s clear how much AI has changed the face of content over the years. Despite the persistent focus on organic rankings, the playing field has since shifted to AI summaries (in Google’s case, AI Overviews or AI Mode). That’s likely to remain the case as models evolve.

Query fan-out, a staple of LLM-powered search, may make search more convenient for users. Businesses and organizations, however, have their work cut out for them.

Presence Beyond the Main Query

Because query fan-out, well, “fans out” to other queries, publishers can no longer count on ranking for a single search term. A website’s exposure must also extend to the sub-queries, or it risks letting rivals dominate them.

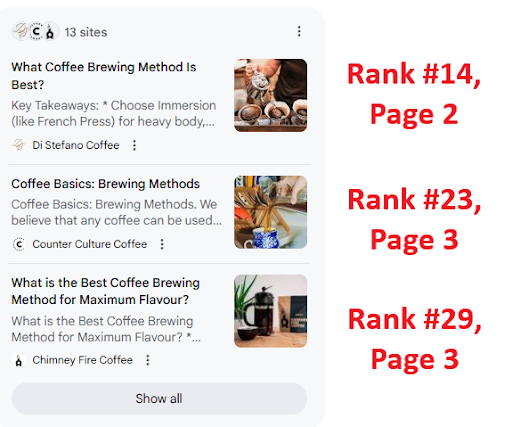

Going back to the search about coffee brewing methods, you’ll notice that AI Mode drew on information from 13 websites. All of them are competing for visibility and, more importantly, visits, though one has an advantage thanks to its citation.

While the AI may reference your content, that reference won’t necessarily convert into visits if it also cites a dozen other sources. This fragmentation is a major downside for publishers, hence the need to extend a website’s presence to all sub-queries.
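One rough way to think about that presence is coverage: the share of sub-queries in which a domain appears among the cited sources. The sketch below computes it in Python. Both the `coverage_by_domain` helper and the metric itself are hypothetical (not something an SEO tool reports), and the sub-queries and URLs in `sample` are made up for illustration.

```python
from urllib.parse import urlparse


def coverage_by_domain(results_by_subquery: dict[str, list[str]]) -> dict[str, float]:
    """Hypothetical metric: fraction of sub-queries that cite each domain at least once."""
    total = len(results_by_subquery)
    counts: dict[str, int] = {}
    for urls in results_by_subquery.values():
        # Count each domain once per sub-query, even if several of its pages are cited.
        for domain in {urlparse(url).netloc for url in urls}:
            counts[domain] = counts.get(domain, 0) + 1
    return {domain: n / total for domain, n in counts.items()}


# Made-up sources for a few steak-related sub-queries.
sample = {
    "best steak doneness": ["https://a.example/doneness", "https://b.example/steak"],
    "steak doneness temperatures": ["https://a.example/temps", "https://c.example/chart"],
    "how to cook steak": ["https://c.example/how-to"],
    "how long to rest steak": ["https://a.example/resting"],
}

if __name__ == "__main__":
    # Print domains from highest to lowest coverage.
    for domain, share in sorted(coverage_by_domain(sample).items(), key=lambda kv: -kv[1]):
        print(f"{domain}: {share:.0%}")
```

In this made-up example, a.example shows up in three of the four sub-queries (75%), while b.example appears in only one (25%), even though both are cited for the main query.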

Topic Clusters Over One-Off Posts

Several years ago, writing an article or blog post about a single topic would still have been effective. Query fan-out shows that’s no longer how search works, especially now that AI is a key feature. Instead of individual topics, AI-powered SEO favors topic clusters.

For the record, industry experts were stressing the importance of topic clusters long before AI. Clusters became more necessary as Google pushed its search engine to understand context more and take queries at face value less. Granted, search engines are still far from human-level comprehension of context, but AI is speeding up their learning.

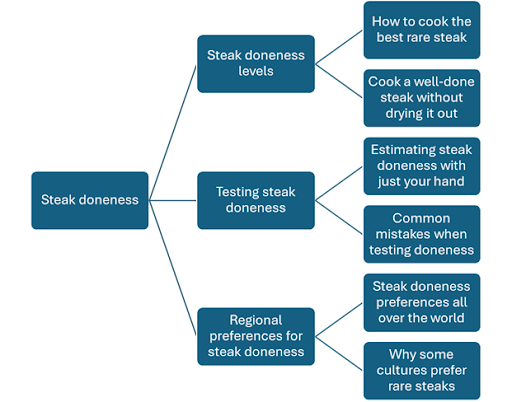

Below is a sample topic cluster for “steak doneness,” which I generated with a free clustering tool.

LLMs don’t always dig deep unless prompted, nor do they always draw on every piece of content in a cluster. That said, their choice of references can vary unpredictably from request to request. Covering as many related topics as possible increases a publisher’s chances of being cited or mentioned by the model.

Even Forgotten Content Has a Chance

Before AI in search was a thing, we were obsessed with ranking high in search results, and for good reason. People rarely bothered exploring beyond the first one or two pages of results, so content had to appear where they would see it. Otherwise, it wouldn’t see the light of day.

Then, AI stepped in and changed that years-old mindset.

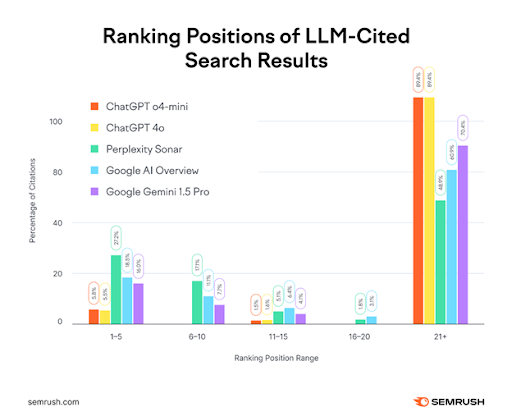

Source: SEMrush

To illustrate the point, I looked up the rankings of the sources AI Mode used for the search about coffee brewing methods. The first cited source wasn’t even in the top 10.

Rankings are as of this writing.

AI cares more about relevance and less about rankings, and query fan-out is one of the key drivers of that behavior. Exploring sub-queries leads the model to more obscure content that it nonetheless judges to be the best source for fulfilling the user’s prompt.

The implication depends on where your website stands. If it has struggled to rank, this is good news because it now has a better chance of being seen. If it has consistently ranked high, you can’t afford to rest on your laurels, because the underdogs are vying for a spot in the AI summary.

Monitoring Will Be A Pain (For Now)

Finding popular keywords is as simple as using tools like Ahrefs and SEMrush, which also crunch the numbers in the process. Yet even as these platforms integrate AI, many of their metrics aren’t as effective at tracking AI citations and mentions.

It also doesn’t help that LLMs differ in how they retrieve sources and generate responses. According to experts, models may retrieve sources through APIs or scraping, and may draw on either raw or synthetic data. On top of that, prompts differ from user to user, which also affects responses, mentions, and citations.

The good news is that some metrics are now trackable, particularly prompts that trigger AI responses and the amount of traffic coming from AI platforms. It might not look like much, but it’s a start while the industry perfects AI response tracking.

You May Be Doing SEO Wrong

Keyword research may still have its uses in modern SEO, but it’s losing ground in the face of AI-powered search. As such, the next time you’re looking for queries, know that you’re not ranking for just a single keyword anymore.

You’re ranking for more than you think, and you’ll need as much as you can get.